HHS study harnesses AI to explore giving a ‘voice’ to kids’ mental health


What’s in a voice? It depends on what can be heard, says Dr. Laura Duncan of Hamilton Health Sciences (HHS), who is leading a study using artificial intelligence (AI) to analyze the voices of teenagers in a McMaster Children’s Hospital (MCH) program to better understand their mental health needs.

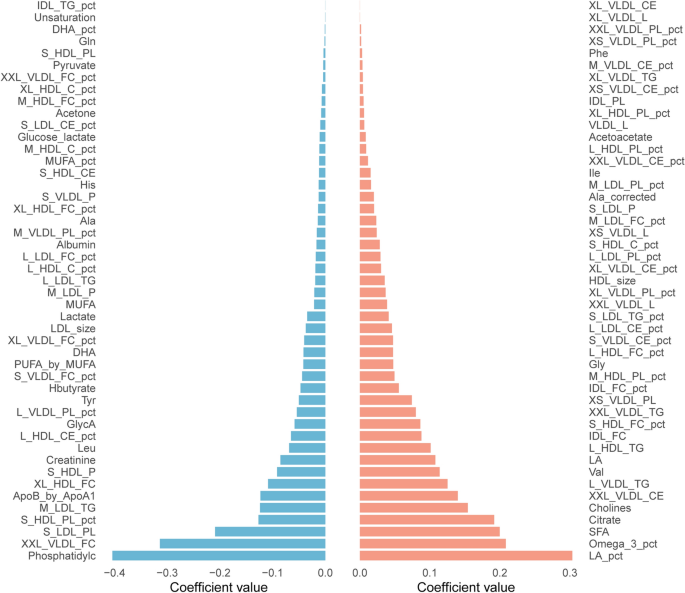

While human listeners can sense tone, emotion, and context in a person’s voice, machine learning ‘listens’ very differently, by converting speech into data, searching for patterns, and detecting tiny, nuanced changes in pitch, volume, speed, and tone that humans can’t pick up.

Out of earshot

AI interprets vocal patterns very differently than human listeners, says Duncan, the research and information systems lead for MCH’s Child and Youth Mental Health Program (CYMHP). The program collects and analyzes data to better understand young people’s mental health needs and to evaluate how well services are working. It also develops tools to assess children and youth, identify trends and gaps in care, and improve services. Duncan is also a researcher with the Offord Centre for Child Studies, a research institute affiliated with HHS and McMaster University.


Research using the electronic information collected by the program includes deep learning, an advanced form of AI that automatically finds patterns in very large or complex data such as images, text and speech.

A recent project recruited 50 CYMHP patients, ages 12 to 17, to better understand their mental health needs using AI voice analysis. For six months, these patients used their phone or computer to record themselves describing pictures and reading chapters of a story provided by the study, then shared the recordings for analysis. Over the same six-month period, they also completed daily sleep and mood ratings.

Yuxiang (Bryce) Lei, a McMaster University master’s student with the Offord Centre, demonstrates how teenage patients used their phone or computer to record themselves describing pictures and reading chapters of a story provided by the study.


“We’re using AI to explore whether indicators in patients’ speech can tell us something about symptom trajectories over the course of their treatment,” says Duncan, adding that such insights can help health-care teams better understand patients’ needs and plan the best and most appropriate treatments.

Next steps

“Our data engineers are currently going through the speech recordings to identify key details that will help us turn the data into models we can work with,” says Duncan, who expects to be ready to share findings as early as this spring.

The voice study is part of an AI deep learning project called SMARTmind: Advancing Mental Health with AI, which launched about five years ago and is funded in part by the Juravinski Research Institute. Duncan is a co‑principal investigator along with child psychologist Dr. Paulo Pires and Dr. Roberto Sassi, a former HHS child psychiatrist now at BC Children’s Hospital.

SMARTmind uses advanced computer tools to study large sets of connected health data, looking for clues that can help predict how often people may need hospital services in the future and how serious those visits might be.

The system builds on online measuring tools already in place to assess and monitor kids’ mental health, such as the Mental Health Questionnaire for Children and Youth (MHQ-CY). Duncan helped create and test the MHQ-CY, an online intake assessment tool, which has become the hub of a community-wide information system.

The MHQ-CY includes an online set of questions that young patients, caregivers, or family members fill out to describe symptoms, concerns and strengths. Such background information helps the care team understand what the young person is experiencing, decide what kind of support or service is needed and ensure that care is consistent across different providers. It also helps organizations better plan and improve mental health services by showing them what needs are most common in the community.

“We have this incredible database that we can apply machine learning to, especially deep learning, to explore new ways of advancing research,” says Duncan, explaining how the MHQ-CY contributes to AI learning.

As technology continues to propel research forward, Duncan hopes to see better connectivity between the local and provincial organizations that support child and youth mental health.

“Implementing an integrated, province-wide system would provide the foundation for AI to really grow in its effectiveness,” says Duncan.
